Thoughts on Irrationality on Bets, from Leo Systrader

January 7, 2012

Most of us have probably heard of the following coin-toss experiments on people. In each experiment, people must choose to play either Game A or Game B.

Experiment 1): Game A: the player wins $1000 if head; wins $50 if tail. Game B: the player wins $500 regardless.

Experiment 2): Game A: the player loses $1000 if head; loses $50 if tail. Game B: the player loses $500 regardless.

With Experiment 1), people tend to choose Game B.

With Experiment 2), people tend to choose Game A.

While I understand this in psychological terms (humans have biases, are not fully rational, etc.), I don't quite understand, at a more fundamental level, what really makes us do this. I hope someone can help explain.

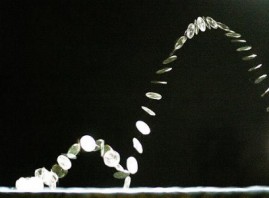

One interesting thing I want to share here is that the TED talk below (July 2010) shows that monkeys behave the same way.

Phil McDonnell writes:

These sorts of thought experiments are played on student volunteers at college campuses. Students are notoriously impecunious. Assume a net worth of $300 or so and then calculate the log of the wealth ratio for each outcome. You will find that the most popular outcomes all follow the log utility function.

The mistake the experimenters make is to assume that $1000 is worth twice as much as $500 to a poor student. In fact it is worth less than twice as much. They assume a linear utility function when a non-linear log function is how humans really value things.
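As a rough check of this claim, the sketch below computes the expected log of the wealth ratio for both games in Experiment 1, assuming a fair coin and the $300 net worth suggested above. The function name `expected_log_utility` is purely illustrative. The log is only defined while ending wealth stays positive, so the sketch covers just the winning games of Experiment 1.

```python
import math

def expected_log_utility(wealth, outcomes):
    """Expected log of the wealth ratio over (probability, payoff) pairs."""
    return sum(p * math.log((wealth + payoff) / wealth) for p, payoff in outcomes)

WEALTH = 300  # assumed net worth of a student, per the comment above

# Experiment 1, assuming a fair coin.
game_a = [(0.5, 1000), (0.5, 50)]   # $1000 on heads, $50 on tails
game_b = [(1.0, 500)]               # $500 regardless

utility_a = expected_log_utility(WEALTH, game_a)
utility_b = expected_log_utility(WEALTH, game_b)

print(f"Game A: {utility_a:.4f}")
print(f"Game B: {utility_b:.4f}")
print("Preferred under log utility:", "A" if utility_a > utility_b else "B")
```

Under these assumptions Game B comes out ahead (roughly 0.98 versus 0.81), which matches the popular choice in Experiment 1.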

Gary Rogan writes:

To understand why any of these biases work the way they do, it's best to imagine hominids barely surviving, rather than even poor college students. There were long stretches when there was more than enough food and other resources, but there were also "funnels" when there were barely enough resources to make it through. We are all descended from the ones who made it through the "funnels". The ones who made the wrong bet left no survivors (or fewer, as the case may be).

So if you've got just enough or almost enough to sustain yourself for the next few days but not after that, you realize that you need to do something soon or else you'll be history after those few days. You need to take a risk, but the weather is really bad, it's cold and stormy (or exceptionally hot, or whatever) and you can't venture out. To make matters worse, a mildly menacing counterpart shows up and "asks" to share your meager resources. Losing half of what you have with great certainty may very well mean death, because you will probably not be in a position to "play" again after the weather gets better. If you estimate a 50/50 chance of winning the fight with your "friend", you will probably go for it, even if the outcome is either a complete loss of your resources (and perhaps your life, but those are now equivalent anyway) or a mild injury.

And now a few days are gone, and you are out of resources anyway. The weather is a little better, and you are faced with a choice: walk towards a distant meadow where you are certain to find enough berries to sustain yourself for a few more days, or pursue a large but elusive prey (or try to take something from a leopard resting in a tree with a fresh catch, or try to fight it out with a different "friend" for his resources, all of which is uncertain). You go for the berries. You take a certain smaller gain over an uncertain larger one when getting enough resources means the difference between life and death.

As for how it comes about, we have neural networks in our brain specifically dedicated to evaluating rewards and costs. That's as proven a fact as anything in neurobiology. Whatever biases worked best to get our distant (and not so distant) ancestors through the "funnels", that's pretty much what we have today.

Phil McDonnell adds:

I think one point that is overlooked in this discussion is that insurance companies do not prefer big bets. Instead they prefer to spread the risk and average out over many small bets. It is also the reason that earthquake and hurricane insurance is so overpriced. They simply do not want highly correlated bets, which increase the risk of serious capital impairment. If they write a lot of earthquake insurance in an area and the big one hits, all policies come due at the same time. So they either ration the insurance by charging too much or they simply refuse to sell more than a certain number of policies in a given area.

In a way managing the insurance portfolio is a lot like managing a stock portfolio. You want to avoid bets with large possible negative outcomes and you want to avoid correlated bets. Rather you should take many smaller uncorrelated positions so no one position can wipe you out.
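The effect of correlation can be illustrated with the standard textbook formula for the variance of an equally weighted average of n bets that share the same variance and the same pairwise correlation rho. The formula and the numbers below are illustrative assumptions, not something taken from the comment above.

```python
import math

def average_bet_std(sigma, n, rho):
    """Std dev of the average of n equal-sized bets with common pairwise correlation rho."""
    variance = sigma**2 * (1 + (n - 1) * rho) / n
    return math.sqrt(variance)

SIGMA = 1.0   # hypothetical risk of a single bet, in arbitrary units
N = 100       # hypothetical number of policies or positions

for rho in (0.0, 0.2, 0.9):
    print(f"rho={rho}: std of the average = {average_bet_std(SIGMA, N, rho):.3f}")
```

With uncorrelated bets the risk of the average shrinks like 1/sqrt(n); as rho approaches 1, adding more bets barely reduces it, which is the earthquake-insurance problem in miniature.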

Leo Systrader comments:

Great points here. Many thanks for all the thoughts.

Question to Philip (maybe to everyone) about the non-linearity of human value. It is understandable, but I wonder if there is any scientific conclusion about it.

Let's see if we can use this theory to re-construct the experiment. Let's also assume the net worth of the players is $500. For simplicity, let's just try Experiment 1).

Experiment 1): Game A: the player wins $X if head; wins $Y if tail. Game B: the player wins $500 regardless.

We hope to come up with an X value that is attractive enough for the players to choose Game A. We also need to make sure that Game A is not heavily favored on expected value, so we choose Y in such a way that the expected value of Game A is not much more than that of Game B, which is $500. Apparently X will need to be much larger than 1000, which means Y will have to be a negative number to balance it out.

As the theory suggests, to make log(X) = 2 * log(500), X must be 500^2 = 250,000. If we set the expected value to 600, we get Y = 2*600 - 250,000 = -248,800.

So then Game A becomes: the player wins $250000 if head; loses $248800 if tail.

Would that make people choose Game A? Not me. Is anything wrong in the above analysis?

On the other hand, we know that Kahneman has a theory saying something like "a person's magnitude of pain from losing amount D equals that of his joy from gaining amount 2.5*D".

So let's use this theory to reconstruct Game A.

Let's first set Y to 300. Compared with the 500 in Game B, the player perceives a loss of 200 in Game A. So we need X to be a gain of 2.5 times 200 above 500, which gives X = 2.5*200 + 500 = 1000.

So now we have a new Game A: the player wins $1000 if head; wins $300 if tail.

I guess now it is very likely people would choose Game A over Game B. But we note that the expected value of Game A is now $650.

If we set Y to 50, it is a loss of 450 relative to Game B. So we make X = 2.5*450 + 500 = 1625.

Game A becomes: the player wins $1625 if head; wins $50 if tail.

Would they play it? Probably, right? In this case, the expected value is 837.5.
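The arithmetic of this reconstruction can be sketched as follows, taking Game B's $500 as the reference point, a fair coin, and the 2.5 ratio quoted above. The function name `reconstruct_game_a` is hypothetical.

```python
LOSS_AVERSION = 2.5   # gain needed to offset a loss of 1, as quoted above
SURE_PAYOFF = 500     # Game B's certain win, used as the reference point

def reconstruct_game_a(y):
    """Return (X, expected value) for a Game A that pays X on heads and y on tails."""
    perceived_loss = SURE_PAYOFF - y              # shortfall relative to Game B
    x = LOSS_AVERSION * perceived_loss + SURE_PAYOFF
    expected_value = 0.5 * x + 0.5 * y            # assumes a fair coin
    return x, expected_value

for y in (300, 50):
    x, ev = reconstruct_game_a(y)
    print(f"Y = {y}: X = {x:g}, expected value = {ev:g}")
```

The lower the tails payoff, the larger the expected-value premium Game A needs over the sure $500 before the perceived gain offsets the perceived loss.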

Phil McDonnell responds:

Assume a starting net worth of 500.

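Game A analysis: 0.5 * ln( (500 + X) / 500 ) + 0.5 * ln( (500 - Y) / 500 ) = expected utility, where Y here is the amount lost on tails and the coin is fair.

Game B analysis: ln( (500 + 500) / 500 ) is the utility of both outcomes, and hence the expected utility.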

The thing is that we need to think in terms of the wealth ratios of the different outcomes. Take the natural logs of the wealth ratios. The wealth ratio is the wealth you end up with for the given outcome divided by the wealth you start with before the bet.
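A minimal sketch of this calculation, assuming a fair coin (so each outcome's log wealth ratio is weighted by 0.5) and taking each ratio as ending wealth over the starting wealth of $500, as in the formulas above. The helper names are illustrative, and the two games tried are the original Experiment 1 and a reconstructed Game A paying $1000 or $300.

```python
import math

START_WEALTH = 500  # starting net worth assumed in the comment above

def log_wealth_ratio(payoff, start=START_WEALTH):
    """Natural log of ending wealth divided by starting wealth."""
    end = start + payoff
    if end <= 0:
        # log is undefined at or below zero wealth; treating it as -inf is an assumption
        return float("-inf")
    return math.log(end / start)

def coin_toss_utility(heads_payoff, tails_payoff):
    """Expected log utility of a fair coin toss (each outcome weighted 0.5)."""
    return 0.5 * log_wealth_ratio(heads_payoff) + 0.5 * log_wealth_ratio(tails_payoff)

game_b = log_wealth_ratio(500)   # sure $500, same in both outcomes

for heads, tails in [(1000, 50), (1000, 300)]:
    game_a = coin_toss_utility(heads, tails)
    choice = "A" if game_a > game_b else "B"
    print(f"A pays {heads}/{tails}: A = {game_a:.4f}, B = {game_b:.4f}, prefer {choice}")
```

Payoffs are signed here, so a loss is passed as a negative number. Any game whose loss would wipe out the starting wealth scores negative infinity and is never preferred.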

I took all the early propositions in the Kahneman and Tversky paper and calculated the logs of the expectations, and found that in every case the participants were using a log-based utility function and were actually choosing quite rationally and correctly. It was the learned professors who were wrongly trying to analyze the problems using binomial probability analysis instead of utility theory.

In other areas of psychology there are various log-based perceptions that have been discovered. For example, there is the concept of a just noticeable difference (jnd): you do not notice that a sound is louder or softer until it changes by a certain amount governed by a log law. The same goes for brightness of light. One might add boiling live lobsters to that list.
